It is cheap and easy to build a machine with 8/16 cores and 32GB of RAM. It is more complicated to make Python use all those resources. This blog post will go through strategies to use all the CPU, RAM, and the speed of your storage device.

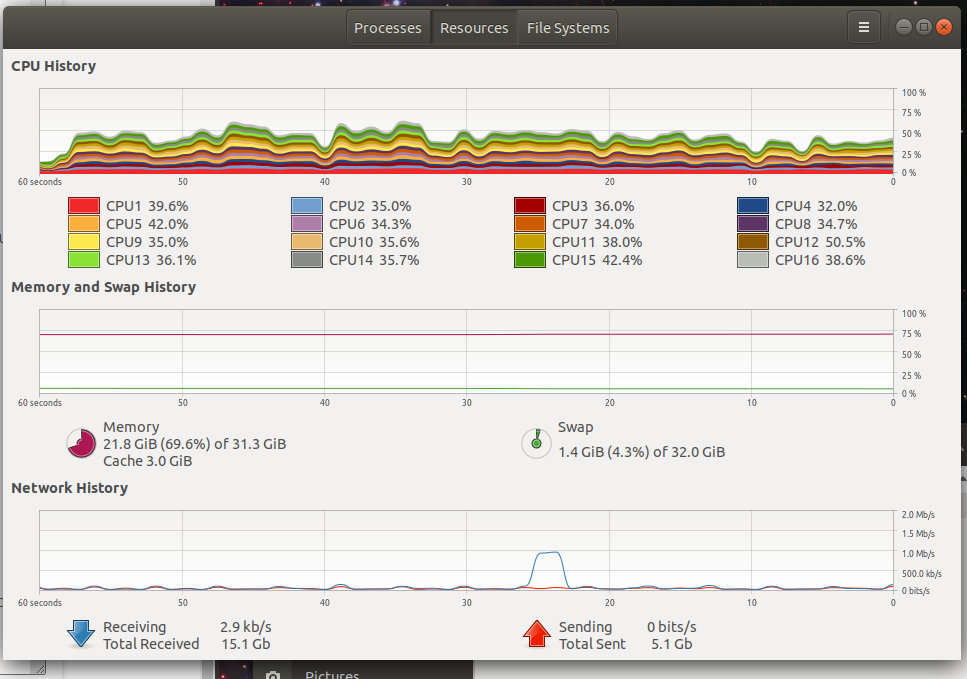

I am using the AMD 3700x from my previous post. It has 8 cores and 16 threads. For this post I will be treating each thread as a core because that is how Ubuntu displays it in System Monitor.

Looping through a directory of 4 million images and doing inference on them one by one is slow. Most of the time is spent waiting on disk I/O: loading the image from disk into RAM is slow, while transforming the image once it is in RAM is very fast and making an inference with the GPU is also fast. In that case Python will only be using 1/16th of the available processing power, and only that single image will be held in RAM. Using an SSD or NVMe drive instead of a traditional hard drive does speed it up, but not enough.

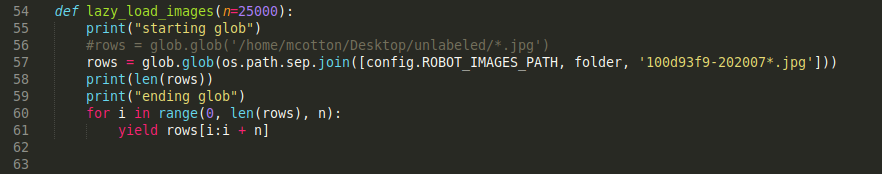
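As a rough sketch of the one-by-one approach, the loop below reads each file and times the disk read separately from the CPU work. It is not the code from the screenshot: `run_sequential`, `preprocess`, and the `*.jpg` pattern are hypothetical names chosen for illustration, and `preprocess` is only a placeholder for the real transform and inference steps.

```python
import time
from pathlib import Path


def preprocess(raw: bytes) -> int:
    # Placeholder for decode/resize/normalize; here it only measures the payload.
    return len(raw)


def run_sequential(image_dir: str, pattern: str = "*.jpg") -> dict:
    """Process files one at a time and report where the time went."""
    read_seconds = 0.0
    work_seconds = 0.0
    count = 0
    for path in sorted(Path(image_dir).glob(pattern)):
        start = time.perf_counter()
        raw = path.read_bytes()  # disk -> RAM
        read_seconds += time.perf_counter() - start

        start = time.perf_counter()
        preprocess(raw)  # CPU work; inference would follow here
        work_seconds += time.perf_counter() - start
        count += 1
    return {"files": count, "read_s": read_seconds, "work_s": work_seconds}
```

Everything here runs in a single process, so only one core is busy and only one image is in memory at a time.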

Loading images into RAM is great, but you will run out at some point, so it is best to lazy load them. In this case I wrote a quick generator function that takes the batch size it should load as an argument.

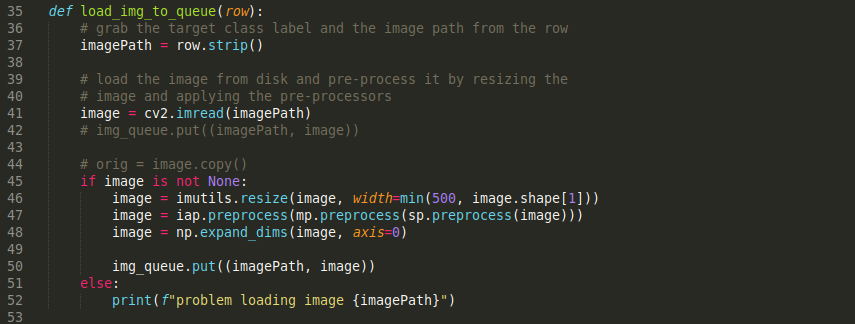
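A minimal sketch of what such a lazy batch generator could look like is shown here. The names `batched_paths` and `load_batches` and the `*.jpg` pattern are assumptions for illustration, and the loader returns raw bytes rather than decoded images to keep the example dependency-free.

```python
from itertools import islice
from pathlib import Path
from typing import Iterable, Iterator, List


def batched_paths(paths: Iterable[Path], batch_size: int) -> Iterator[List[Path]]:
    """Yield lists of at most batch_size items without materializing the input."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    iterator = iter(paths)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch


def load_batches(image_dir: str, batch_size: int, pattern: str = "*.jpg") -> Iterator[List[bytes]]:
    """Lazily read one batch of files at a time into RAM."""
    for batch in batched_paths(Path(image_dir).glob(pattern), batch_size):
        # Only the current batch is held in memory.
        yield [path.read_bytes() for path in batch]
```

Because both functions are generators, nothing is read from disk until the consumer asks for the next batch, so memory use scales with the batch size rather than with the size of the directory.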

Dealing with a batch of images is better than loading them individually, but they still need to be pre-processed by the CPU and placed in a queue. This is slow when the CPU is only able to use 1/16th of its abilities.

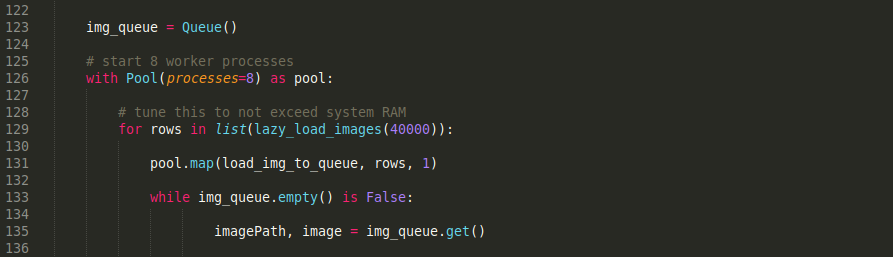

Using the included multiprocessing package you can easily create a bunch of processes and use a queue to shuffle data between them. It also includes the ability to create a pool of processes to make it even more straightforward.
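The pool approach could be sketched roughly as follows. This is an illustration under assumptions, not the code from the screenshot: `preprocess_file` and `preprocess_parallel` are hypothetical names, the worker only reads the file and returns its size as a stand-in for real pre-processing, and the `pool_factory` parameter is an addition of mine that makes it easy to swap in `multiprocessing.pool.ThreadPool` for quick experiments.

```python
import multiprocessing as mp
from pathlib import Path
from typing import Callable, Iterable, Iterator, Optional, Tuple


def preprocess_file(path: str) -> Tuple[str, int]:
    """Hypothetical worker: read one file and do the CPU-side work on it."""
    raw = Path(path).read_bytes()
    # Placeholder for decode/resize/normalize; returns the byte count instead.
    return path, len(raw)


def preprocess_parallel(
    paths: Iterable[str],
    worker: Callable = preprocess_file,
    processes: Optional[int] = None,  # None lets the pool use every CPU it sees
    chunksize: int = 64,
    pool_factory: Callable = mp.Pool,
) -> Iterator[Tuple[str, int]]:
    """Spread the per-file work over a pool of worker processes."""
    with pool_factory(processes) as pool:
        # imap_unordered hands results back as soon as any worker finishes,
        # so a slow file does not hold up the rest.
        yield from pool.imap_unordered(worker, paths, chunksize=chunksize)
```

When using real processes, call `preprocess_parallel` from inside an `if __name__ == "__main__":` guard and keep the worker at module level so it can be pickled. A larger `chunksize` reduces the overhead of passing many small tasks between processes.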

In my own testing, my HDD was still slowing down the system because it wasn't able to keep all of the CPU processes busy. I was only able to utilize ~75% of my CPU when loading from a 7200RPM HDD. For testing purposes I loaded up a batch on my NVMe drive, and it easily exceeded the CPU's ability to process them. Since I only have a single NVMe drive, I will need to wait for prices to come down before I can convert all of my storage over to ultra-fast flash.

Using the above code you can easily max out your RAM and CPU. Doing this for batches of images means that there is always a supply of images in RAM for the GPU to consume. It also means that going through those 4 million images won't take longer than needed. The next challenge is to speed up GPU inference.
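One way to keep that supply of batches ready without letting RAM grow unbounded is a bounded queue filled by a background thread. The sketch below is an assumption on my part rather than the code used in this post, and `prefetch` and `max_batches` are hypothetical names.

```python
import queue
import threading
from typing import Iterable, Iterator, TypeVar

T = TypeVar("T")
_DONE = object()  # sentinel marking the end of the stream


def prefetch(batches: Iterable[T], max_batches: int = 4) -> Iterator[T]:
    """Yield batches while a background thread keeps up to max_batches ready."""
    buffer: queue.Queue = queue.Queue(maxsize=max_batches)

    def producer() -> None:
        try:
            for batch in batches:
                # put() blocks while the buffer is full, which caps RAM use.
                buffer.put(batch)
        finally:
            # Always signal the end so the consumer never waits forever.
            buffer.put(_DONE)

    threading.Thread(target=producer, daemon=True).start()
    while True:
        item = buffer.get()
        if item is _DONE:
            return
        yield item
```

The consumer (for example the GPU inference loop) iterates over `prefetch(...)` as usual, and `max_batches` is the knob that trades RAM for a deeper buffer.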